Overview:

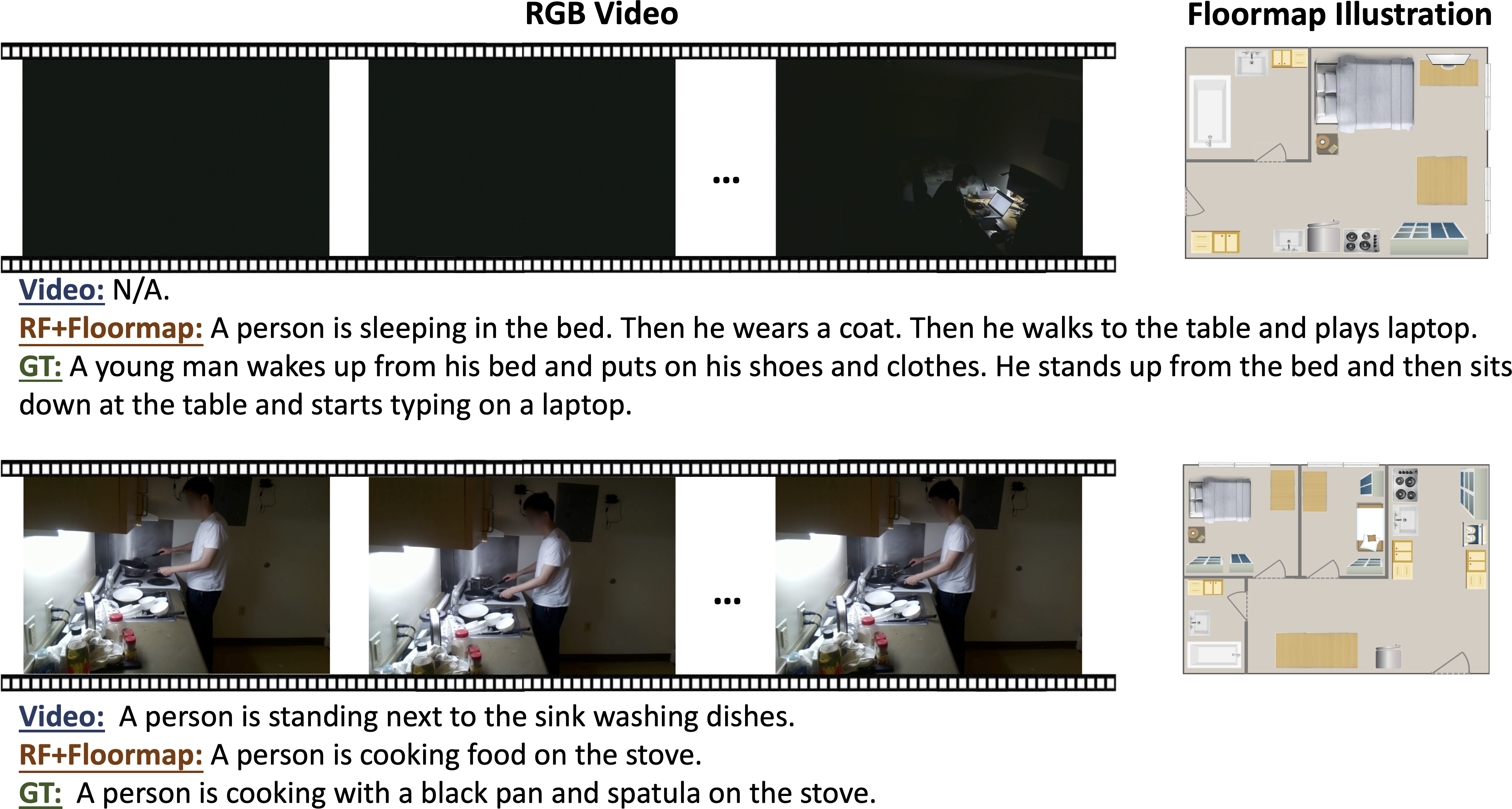

This paper aims to caption daily life --i.e., to create a textual description of people's activities and interactions with objects in their homes. Addressing this problem requires novel methods beyond traditional video captioning, as most people would have privacy concerns about deploying cameras throughout their homes. We introduce RF-Diary, a new model for captioning daily life by analyzing the privacy-preserving radio signal in the home with the home's floormap. RF-Diary can further observe and caption people's life through walls and occlusions and in dark settings. In designing RF-Diary, we exploit the ability of radio signals to capture people's 3D dynamics, and use the floormap to help the model learn people's interactions with objects. We also use a multi-modal feature alignment training scheme that leverages existing video-based captioning datasets to improve the performance of our radio-based captioning model. Extensive experimental results demonstrate that RF-Diary generates accurate captions under visible conditions. It also sustains its good performance in dark or occluded settings, where video-based captioning approaches fail to generate meaningful captions.